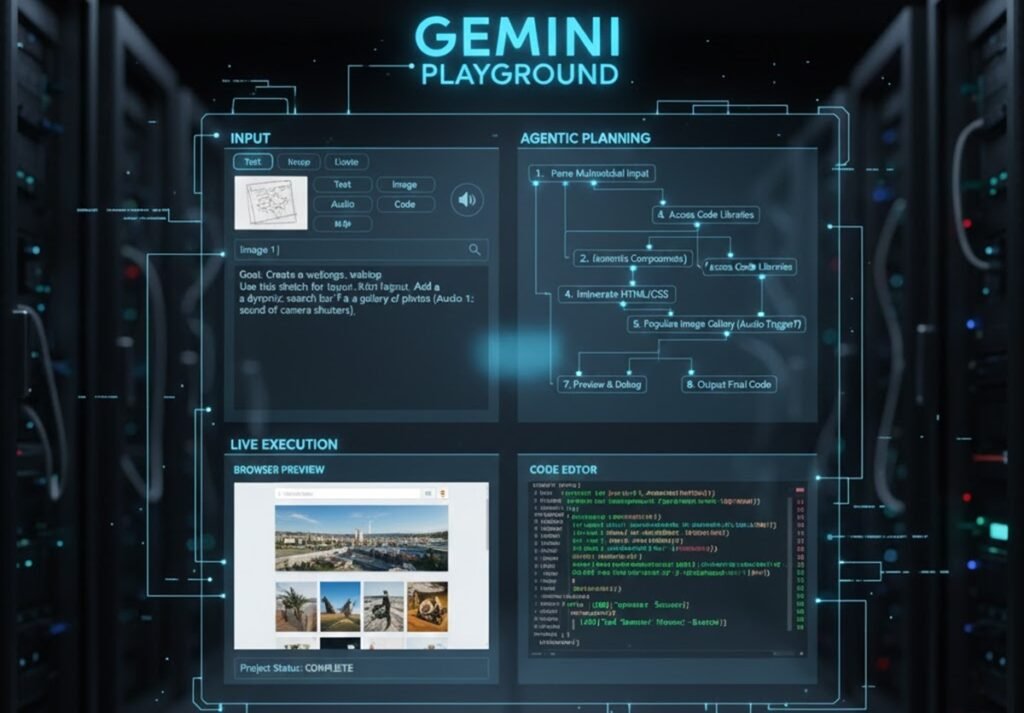

Google’s rapid advancements in AI have redefined what we expect from intelligent systems. One of the most transformative innovations of 2025 is the Gemini Playground, a space where users can interact with Gemini multimodal AI in its most powerful form. Unlike traditional chatbot-style interfaces, this environment lets Gemini reason across text, images, video, audio, and structured data, and even take agentic actions to complete tasks on behalf of the user.

This shift is not just about better prompts. It represents a new era where AI becomes a multi-skilled digital collaborator capable of understanding context, predicting user intent, and autonomously completing workflows.

In this article, we’ll explore how the Gemini Playground works, why multimodal reasoning is so groundbreaking, and what makes agentic AI the next major leap in productivity and automation.

What Makes Gemini Playground Different?

Gemini Playground is Google’s dedicated testing and interaction platform that showcases the full potential of the Gemini model family. While many platforms focus on text-based interactions, Gemini Playground adds:

- Image analysis & visual reasoning

- Video frame understanding

- Audio-to-text & contextual interpretation

- Document parsing with structure awareness

- Tool integration & API execution

- Action-taking abilities through agentic workflows

The result is a flexible, highly intuitive environment that mimics how humans process multiple forms of information at once.

The Power of Multimodal Reasoning

The core breakthrough behind Gemini multimodal AI is its ability to ingest and understand multiple formats simultaneously. Instead of needing separate tools for text, image recognition, video analysis, and data processing, Gemini unifies them into a single model.

Examples of Multimodal Capabilities:

1. Text + Image Understanding

Upload a photo of a whiteboard diagram and ask Gemini to convert it into a detailed project plan. It not only reads the text but also interprets shapes, flowcharts, and the relationships between them.

2. Video Breakdown

Provide a video clip and ask Gemini to summarize scenes, identify objects, or extract timelines — a huge advantage for educators, analysts, and creators.

3. Audio Interpretation

From meeting recordings to podcast segments, Gemini can extract key points, action items, and even emotional tone.

4. Document-Level Intelligence

Gemini Playground lets users upload PDFs, spreadsheets, and presentations and transform them into insights, rewritten content, or clean datasets.

Multimodal reasoning gives users a single, unified “brain” that can switch between these tasks without handing work off to separate, specialized tools.
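The capabilities above all come down to sending several kinds of input in one request. Here is a minimal sketch of that idea. The `Part` structure and the `build_request` helper are hypothetical names invented for illustration; they are not part of any Google API, and the placeholder bytes stand in for a real image file.

```python
import base64
import mimetypes
from dataclasses import dataclass


@dataclass
class Part:
    """One piece of a multimodal prompt (hypothetical structure)."""
    mime_type: str
    data: str  # plain text, or base64-encoded bytes for binary content


def text_part(text: str) -> Part:
    return Part(mime_type="text/plain", data=text)


def file_part(filename: str, raw: bytes) -> Part:
    # Guess the MIME type from the file extension; fall back to a generic type.
    guessed, _ = mimetypes.guess_type(filename)
    return Part(
        mime_type=guessed or "application/octet-stream",
        data=base64.b64encode(raw).decode("ascii"),
    )


def build_request(parts: list[Part]) -> dict:
    # A single request carries every modality side by side.
    return {"parts": [{"mime_type": p.mime_type, "data": p.data} for p in parts]}


request = build_request([
    text_part("Convert this whiteboard diagram into a project plan."),
    file_part("whiteboard.png", b"\x89PNG placeholder bytes"),
])
print([p["mime_type"] for p in request["parts"]])
```

The point of the design is that text and image travel together, so the model can relate the instruction to the diagram instead of processing each in isolation.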

The Shift to Agentic AI: Doing More Than Responding

Where Gemini Playground truly stands out is its agentic capabilities: the ability of the AI to take actions, not just generate answers.

Agentic AI means:

- Setting up workflows

- Triggering tools

- Querying APIs

- Automating tasks

- Completing multi-step processes

- Making decisions based on context
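The list above can be illustrated with a small tool-dispatch loop. This is only a sketch under stated assumptions: `stub_planner` is a hypothetical stand-in for the model's decision-making, and the two tools are toy functions, not real Gemini integrations.

```python
from typing import Callable


# Hypothetical tools the agent is allowed to call.
def word_count(text: str) -> int:
    return len(text.split())


def to_upper(text: str) -> str:
    return text.upper()


TOOLS: dict[str, Callable[[str], object]] = {
    "word_count": word_count,
    "to_upper": to_upper,
}


def stub_planner(goal: str) -> list[dict]:
    """Stand-in for the model: returns a fixed plan of tool calls."""
    return [
        {"tool": "to_upper", "input": goal},
        {"tool": "word_count", "input": goal},
    ]


def run_agent(goal: str) -> list[dict]:
    """Execute each planned step and record what happened."""
    results = []
    for step in stub_planner(goal):
        tool = TOOLS.get(step["tool"])
        if tool is None:
            # Refuse unknown tools instead of guessing.
            results.append({"tool": step["tool"], "error": "unknown tool"})
            continue
        results.append({"tool": step["tool"], "output": tool(step["input"])})
    return results


log = run_agent("summarize the quarterly report")
for entry in log:
    print(entry)
```

In a real agentic system the plan would come from the model and could change after each tool result; the fixed plan here only shows the dispatch pattern.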

Example Scenarios of Agentic Reasoning

1. Research & Reporting Automation

You can ask Gemini to research a topic, gather data from documents you upload, generate a structured report, cross-verify facts, and export the final document.

2. Coding & Debugging

Gemini can read code files, identify bugs, test scenarios, and rewrite functions as a single agentic workflow.

3. Design Workflow Assistance

Upload sketches, reference images, or UI ideas, and Gemini can build wireframes, generate CSS, or recommend design systems.

4. Business Task Execution

From writing proposals to analyzing financial sheets, Gemini can follow clear logical steps to complete structured tasks.

Why Gemini Playground Matters for Developers

For developers, the playground acts like a powerful sandbox environment.

Key benefits include:

- Real-time testing of prompts

- Integration with APIs and plugins

- Ability to trigger tools programmatically

- Debugging support with multimodal context

- End-to-end automation capabilities

It becomes a collaborative partner rather than a passive assistant.
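The prompt-testing benefit listed above could look something like the following in practice. This is a hedged sketch: `stub_model` is a hypothetical placeholder for a real model call, and the substring check is just one simple way to judge a reply.

```python
from typing import Callable


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; returns canned replies."""
    if "summarize" in prompt.lower():
        return "Summary: three key points."
    return "I am not sure what you need."


def run_prompt_checks(
    model: Callable[[str], str],
    cases: list[tuple[str, str]],
) -> list[str]:
    """Return the prompts whose reply lacks the expected substring."""
    failures = []
    for prompt, expected in cases:
        reply = model(prompt)
        if expected not in reply:
            failures.append(prompt)
    return failures


cases = [
    ("Summarize this document.", "Summary"),
    ("Translate this to French.", "Bonjour"),
]
failures = run_prompt_checks(stub_model, cases)
print(failures)
```

Swapping `stub_model` for a function that calls an actual model would turn this into a small regression check that can be rerun whenever a prompt changes.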

Why It Matters for Non-Technical Users

The rise of Gemini multimodal AI is especially empowering for non-coders.

Some popular use cases:

- Creating presentations from rough notes

- Summarizing long documents or case files

- Analyzing images for product quality

- Creating marketing plans

- Converting handwritten notes into structured databases

Gemini Playground helps users go from idea to execution faster than ever before.

Is This the Future of AI Interaction?

Absolutely. Multimodal, agentic systems represent the next generation of AI — intelligent, context-aware digital companions capable of working alongside humans like skilled assistants.

Gemini Playground is the clearest preview of this future. It is not just a tool; it is a platform that demonstrates how AI will:

- Understand the world in multiple dimensions

- Think more like humans

- Make informed decisions

- Convert inputs into actionable outcomes

- Automate complex multi-step processes

As industries continue to adopt AI-driven workflows, Gemini’s agentic approach could become the standard across business, education, healthcare, marketing, and engineering.

Conclusion

Google’s Gemini Playground highlights how fast AI is evolving beyond simple prompt-response systems. With advanced Gemini multimodal AI, users can now combine text, visuals, audio, and data in a single experience — and let the model take intelligent actions. It marks a significant shift toward agentic computing, bringing us closer to practical AI co-workers who can reason, understand, and execute tasks independently.